By Andrew Neale

Last year I gave a talk at the First Forensic Forum about the cloud and how it can be used for nefarious means.

What came as a surprise to me was how little the forensic practitioners there knew about the Cloud, Cloud service providers (CSPs) and all the new technology out there in this rapidly evolving ecosystem.

I got the occasional “I’ve looked at S3 to store some data” but that was it. None of them were aware of how CSPs hold data or how immutable infrastructure can be. With immutable systems, data can disappear quickly.

Computer Forensics is often about going through data sets or audit trails and finding evidence of a crime or activity that an individual or a company has committed.

Normally this would be done by going through a desktop PC or laptop, or by checking servers. This still very much applies: the world is still dominated by local storage, and if it’s local it can be imaged and searched. But the public cloud has arrived, and with it a whole load of new complications for Forensic Investigators.

The Cloud used to be something new and small off in the distance, but now in 2019 the majority of companies are either moving to the cloud, already on the cloud or thinking about moving to it, and users are often using some sort of cloud-backed storage solution.

So, what now for Forensic Investigators? What do you do when you come across cloud-based solutions? Do all Forensic Investigators need cloud knowledge? In short, yes. But different areas will require different levels of cloud knowledge.

Investigations as Incident response for a company

Let’s start by looking at being a Forensic Investigator embedded in an incident response team for a company that has fully embraced the Cloud and has a sizeable Cloud presence. In this instance you would need decent knowledge of their chosen CSP. You might need to know not only how their cloud is built but also how to take it apart to prevent incidents from spreading. In other cases, you might end up building their incident response platform to enable you to do your job quickly and effectively.

Here’s a great talk done by Ben Potter at AWS re:Invent 2017

What you should take note of here is that AWS have provided the necessary tools, but it is up to you or a cloud engineer to stitch them together.

Investigating a security event or forensically analysing instances and data by hand can be labour intensive and time consuming, with the risk of missing steps and losing data critical to the investigation.

This example isn’t truly what you would encounter in the wild, as you would have been hired for the role and would generally be expected to have some cloud knowledge.

Let’s take a look at what it would be like to be a Forensic Investigator on the outside of the cloud world.

Investigations as an Outside Forensic investigator

Extra challenges it presents

You’re a Forensic Investigator who has been asked to investigate a person. This person has been using a public cloud provider in some way (and thankfully you have credentials).

Where do you start? Do you log straight in? What if, by logging in, you trigger a rule on that person’s cloud account? Was this person technical enough in the first place to build out that kind of automation?

For this example, let’s assume that this person is technical enough to use the cloud, but not to its full potential, and has set up zero automation.

As with any investigation, the preservation of data and audit trails is paramount, but so is the gathering of evidence.

You have logged into the person’s cloud account, but all you can see is the many different types of cloud services the provider offers. It’s a lot to take in, so where would you even start? Depending on your own cloud knowledge, I would start by looking over the data storage solutions to see which ones the person is using and what data you could possibly get out. This could be done in two ways:

- Go to each data service and check through its dashboard to see what is there. Taking AWS as an example, you would check S3 to see whether they have any buckets, how many, and whether they are encrypted. From there you would check EBS to see if they have any root volumes or additional storage volumes. Next, move on to RDS and see if they are running any databases. What you find will shape your data gathering exercise.

- The other option requires more technical cloud knowledge. If there are access keys present (or you want to create some, but that could mean alerting the account, so proceed with caution), you could use the CSP’s CLI tools to query all the data solutions from a script and output the findings to a report. It’s quicker and can be reused in other investigations as needed.
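To give a feel for the scripted option, here is a minimal sketch in Python. It assumes AWS as the CSP and the third-party boto3 SDK rather than the CLI itself; the function name `inventory_storage` and the report layout are my own illustration, not part of any AWS tool. It only makes read-only calls, reads just the first page of results from each service, and covers a single region, so treat it as a starting point rather than a complete inventory.

```python
from datetime import datetime, timezone


def inventory_storage(s3, ec2, rds):
    """Build a read-only summary of S3 buckets, EBS volumes and RDS instances.

    s3, ec2 and rds are expected to be boto3 clients (or any objects that
    expose the same list/describe methods). Nothing is modified or deleted.
    """
    report = {
        # Record when the snapshot was taken, in UTC, for the case notes.
        "collected_at": datetime.now(timezone.utc).isoformat(),
        "s3_buckets": [],
        "ebs_volumes": [],
        "rds_instances": [],
    }

    # S3: names of every bucket owned by the account.
    for bucket in s3.list_buckets().get("Buckets", []):
        report["s3_buckets"].append(bucket["Name"])

    # EBS: volumes in the client's region (first page of results only).
    for vol in ec2.describe_volumes().get("Volumes", []):
        report["ebs_volumes"].append({
            "id": vol["VolumeId"],
            "size_gib": vol["Size"],
            "encrypted": vol.get("Encrypted"),
            "attached_to": [a["InstanceId"] for a in vol.get("Attachments", [])],
        })

    # RDS: database instances in the client's region (first page only).
    for db in rds.describe_db_instances().get("DBInstances", []):
        report["rds_instances"].append({
            "id": db["DBInstanceIdentifier"],
            "engine": db["Engine"],
            "encrypted": db.get("StorageEncrypted"),
        })

    return report


# Example usage, assuming boto3 is installed and the existing access keys
# and a default region are already configured on the analysis machine:
#
#   import boto3, json
#   session = boto3.Session()
#   report = inventory_storage(
#       session.client("s3"), session.client("ec2"), session.client("rds")
#   )
#   print(json.dumps(report, indent=2))
```

Passing the clients in as arguments keeps the function easy to rehearse against stand-in objects before pointing it at a live account, which matters when every call you make may itself be logged.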

But there are key parts of the CSP’s own security measures you need to be aware of. You can’t see the root VMs and their volumes, and you won’t be able to see network or server logs beyond what was provisioned. The public cloud is built on a shared tenancy system, and first and foremost the CSPs will protect other clients’ systems and data through isolation.

Then there is the question: can you recover deleted data?

More often than not, with most CSPs’ data solutions, it’s not possible, as we can see from an AWS security white paper:

“When an object is deleted from Amazon S3, removal of the mapping from the public name to the object starts immediately, and is generally processed across the distributed system within several seconds. Once the mapping is removed, there is no remote access to the deleted object. The underlying storage area is then reclaimed for use by the system.”

Given the speed at which data enters AWS environments, there is a good chance the system has already reclaimed that storage and overwritten any useful information that may have been left behind.

What the future will bring and the need to adapt rapidly

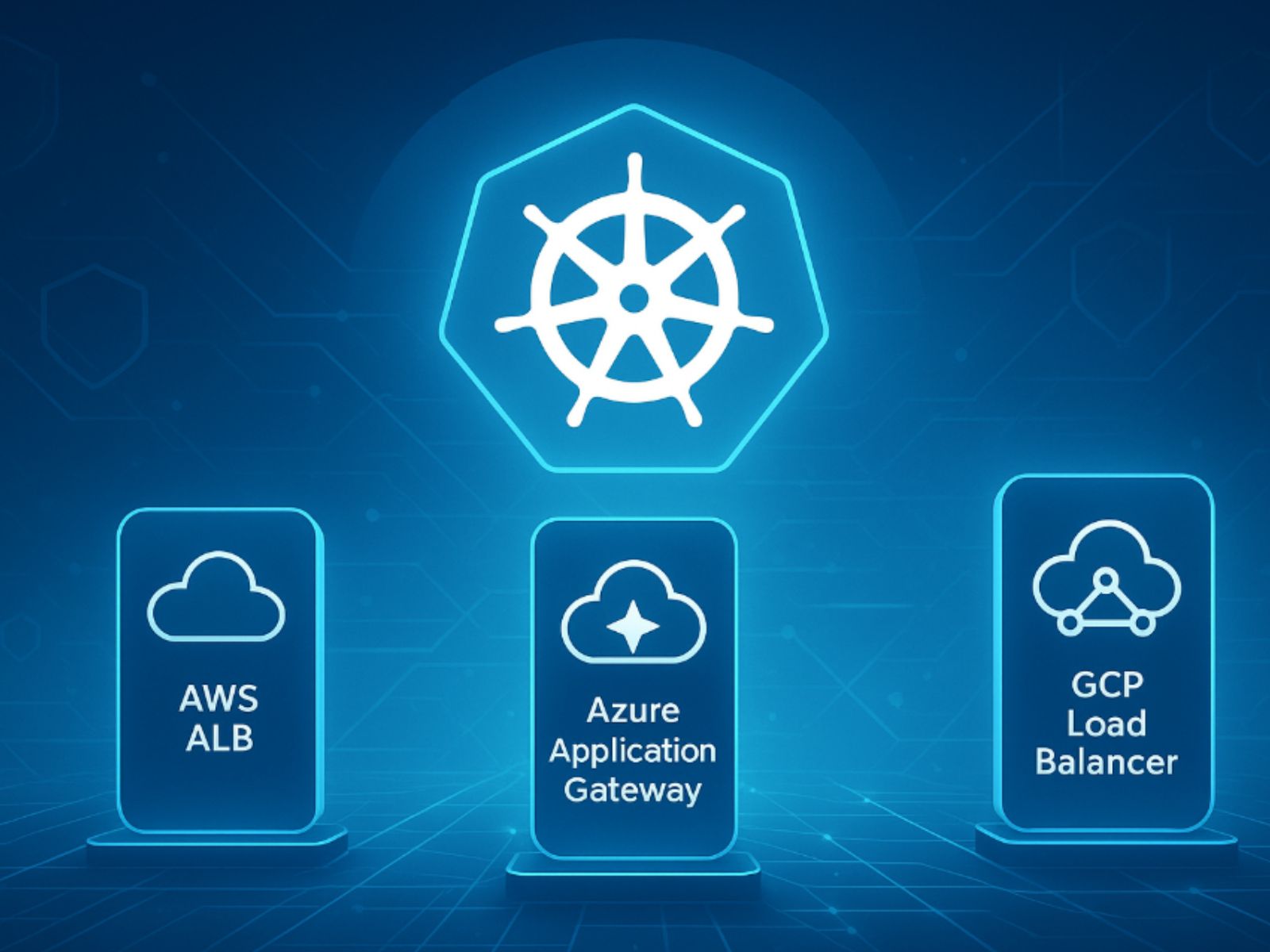

Hopefully, as a Forensic Investigator, you have seen the need to start embracing the cloud and to make moves towards learning the basics of the most common CSPs (AWS, Azure, IBM and GCP).

What you need to be prepared for is the rapid pace of change and evolution of cloud services. You may have had good knowledge of a certain service 12 months ago, but it can change several times a year as functionality is added or modified.

It may not be this year or next year, but soon people will move beyond cloud solutions created by companies (OneDrive, Google Drive, Dropbox) to making their own storage solutions in the cloud, at lower cost and with even more control. There are already many open GitHub repos with apps that do this for you.

The public cloud is here. Are you prepared enough to investigate it?